Real-Time Analytics Summit 2023 brought together an impressive collection of industry-leading developers, architects of the world’s fastest streaming analytics databases, and representatives of all the essential real-time data technologies. It was an assembly of industry leaders and innovators, all gathered in one place to advance the state of the art. Here is your on-the-ground reporting straight from the halls of the Hotel Nikko, San Francisco, where the event was held recently, April 25th and 26th, 2023.

Kishore’s Keynote: The Rise of Real-Time Analytics

The event kicked off with a keynote from StarTree founder and CEO Kishore Gopalakrishna. He noted three critical dimensions that are required to move from historical Online Analytical Processing (OLAP) systems, such as batch-oriented data warehouses, to real-time analytics. Data freshness, low latency, and high concurrency. It requires new, purpose-built databases to capture data in real time from event streaming systems, index it quickly, and make it immediately available for querying. Plus, they need flexibility and scalability to support magnitudes greater queries per second (QPS). These were the factors that gave rise to such databases as Apache Pinot™.

You can watch Kishore’s full keynote now:

Gwen Shapira: Modern SaaS is Real-Time SaaS

Gwen Shapira, co-founder and Chief Product Officer at Nile, was the headline speaker on the second day of Real-Time Analytics Summit. The central premise of Gwen’s talk is that “The real value of real-time data lies outside the C-suite, in the hands of users who need real-time data for real-time decisions and action.” As Gwen pointed out, “Business strategy doesn’t change every 20 milliseconds,” but apps that empower line workers, front-line managers, and customers greatly benefit from real-time data. Gwen emphasized the need for data freshness so that users can make critical decisions throughout the day. Note: data freshness is not the same thing as a low-latency, responsive UI. What if your app renders quickly, but all the data presented is hours or days out-of-date?

You can watch Gwen’s full keynote presentation here:

Panel Discussion: Microsoft, LinkedIn, Cisco, and DoorDash Weigh In

The second day of the Summit also saw StarTree’s VP of Developer Relations, Tim Berglund, lead a lively and insightful panel discussion between four powerhouse leaders in real-time analytics:

- Dipti Borkar, VP and GM, Microsoft

- Kapil Surlaker, VP of Engineering, LinkedIn

- Sachin Joshi, Sr Director of Engineering, Cisco Webex

- Sudhir Tonse, Director of Engineering, DoorDash

They each spoke about the historic shifts real-time analytics allowed in their organizations. Kapil (LinkedIn) noted that a new type of database was required to run these workloads: “There’s no way you’re going to run that level of QPS from a data warehouse. Search kind of worked but wasn’t built for this.” This was what led them to invent Apache Pinot internally. The value was only obvious after the new database was available. But it allowed LinkedIn to do things that were never possible before.

From left to right: Tim Berglund, VP of Developer Relations, StarTree; Dipti Borkar, VP and GM, Microsoft; Kapil Surlaker, VP of Engineering, LinkedIn; Sachin Joshi, Sr Director of Engineering, Cisco Webex; Sudhir Tonse, Director of Engineering, DoorDash

Sachin (Cisco) talked about how the pandemic drove the growth for Cisco Webex. Suddenly it wasn’t just the service admins; it was HR department heads and CxOs that wanted to know what features were used and how much time was spent in-app. And it all needed live troubleshooting.

Sudhir (DoorDash) dove into the convergence of multiple waves of advances: how the adoption of microservices, public cloud services, and on-demand consumer services all drove the need for real-time data analytics.

Dipti (Microsoft) observed the move from batch, nightly updates to real-time was the drive to derive value out of data, plus the trend towards “Saas-ification.” While much began in open source, it’s become mainstream today, and noted the massive scale of Azure Analytics. She emphasized how Microsoft “dogfoods” and uses real-time analytics for telemetry and logging.

You can watch the full video here:

Real-Time Analytics Databases Compared

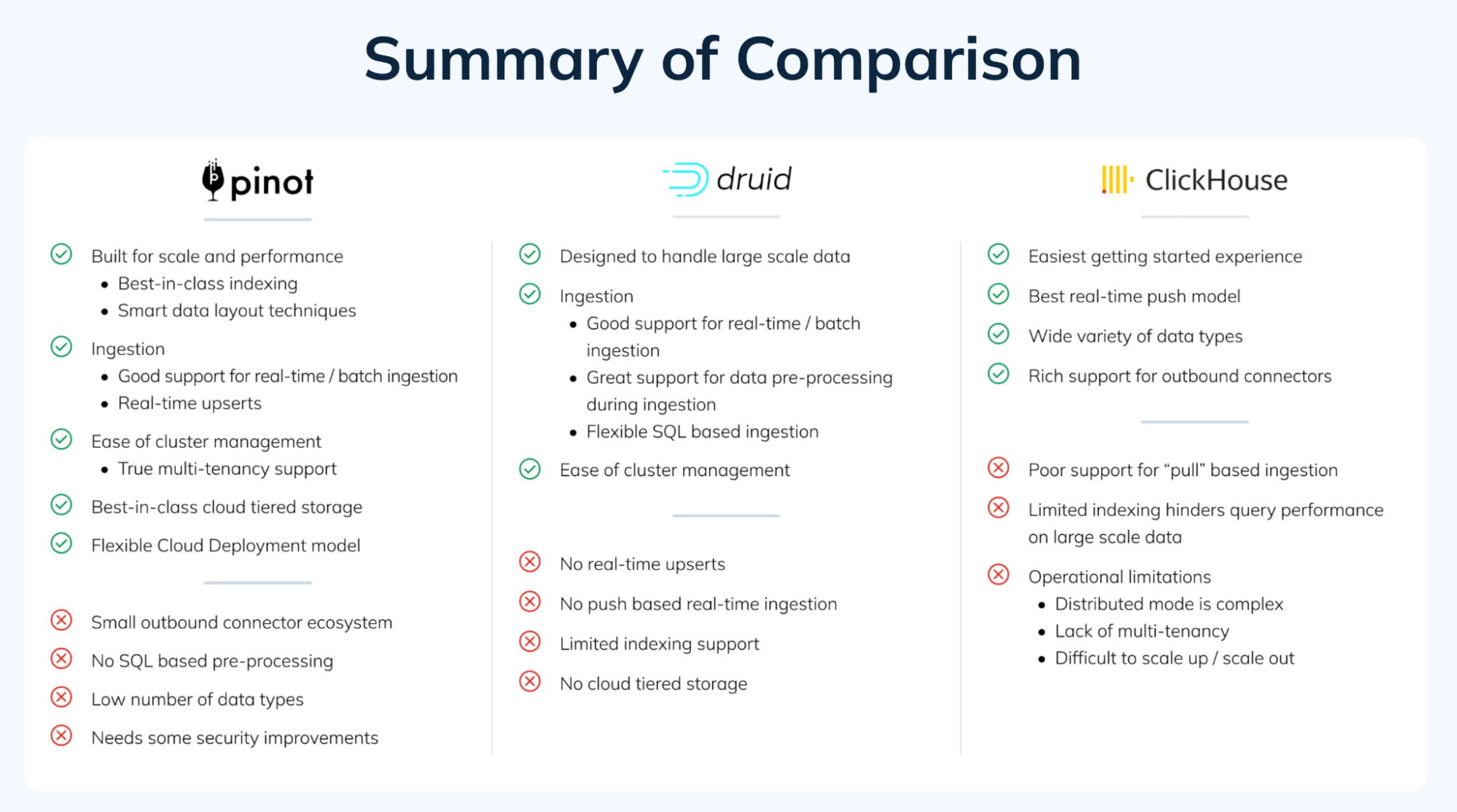

Real-Time Analytics is not a single-vendor driven industry. In fact, it is an industry primarily driven by a rich and diverse array of open source software options, including Apache Druid, Apache Pinot, and Clickhouse. To that end, A Tale of Three Real-Time OLAP Databases was a session comparing these three leading databases. Presented by StarTree’s Chinmay Soman and Neha Pawar, the goal was to objectively assess the current state of the art, clarify and objectively compare each respective database’s capabilities, and help guide users to the right real-time analytics database for their use case.

This talk was accompanied by a related blog, which you can read in full: A Tale of Three Real-Time OLAP Databases: Apache Pinot, Apache Druid, and ClickHouse

Apache Pinot-Related Talks

Real-Time Analytics Summit was a great showcase for Apache Pinot. It included technical deep dives, discussions of the latest features and capabilities, real-world implementations, use cases, and lessons learned. Here’s a list of the Apache Pinot related talks. In due time we’ll make recordings of these available on-demand:

- Query Processing Resiliency in Pinot — LinkedIn’s Vivek Iyer and Jia Guo shared how they worked to make Apache Pinot more robust by adding two new capabilities: first, Adaptive Server Selection at the Pinot broker to route queries intelligently to the best available servers, and second, RunTime Query Killing to protect the Pinot servers from crashing. I captured this deep technical dive live in this thread on Twitter.

- Unlock the value of your data with Apache Pinot and AWS — AWS Solutions Architects JP Santana and Wahab Syed discussed how users can integrate Apache Pinot better into their existing AWS ecosystems.

- Leveraging Pinot’s Plugin Architecture to Build a Unified Stream Decoder — DoorDash’s Mike Davis showed how the food delivery giant created Iguazu, their in-house stream processing framework with Apache Pinot by developing a custom StreamMessageDecoder implementation incorporating the Iguazu consumer library.

- Gently Down the Stream with Apache Pinot — StarTree’s own Navina Ramesh explored how powerful real-time ingestion features in Apache Pinot can almost (but not quite) eliminate the need for stream processing pipelines. She also took a peek into the real-time engine at the heart of Apache Pinot, including its stream consumer, decoder, indexer, pipeline transformer, and upsert manager. Last, she looked at transform functions and ingestion transforms available in Apache Pinot.

- Minions to The Rescue—Tackling Complex Operations in Apache Pinot — StarTree’s Haitao Zhang and Xiaobing Li discussed the purpose and implementation of the Minion component in Apache Pinot. In brief, they are standby helpers, built on top of Apache Helix, that can improve certain mundane, generic, or even complex tasks such as file ingestion, segment refresh, time or value partitioning, or data merges or splits. My Twitter thread gives a brief glimpse into their talk here.

- Hydrating Real-Time Data in Pinot with Fast Batch Queries on Trino — Starburst’s Elon Azoulay and Martin Traverso showed how to combine Trino, the distributed SQL query engine, with Apache Pinot. It also went into the recent updates in the Trino-Pinot connector.

- Building a Real-Time IoT Application with Apache Pulsar and Apache Pinot — Cloudera’s Tim Spann (pictured) is always a great presenter for users looking to get hands-on, how-to practical guidance. His live demo took attendees step-by-step through getting IoT thermal sensor data first into Apache Pulsar event streams, and then into Apache Pinot for analysis via the pinot-pulsar stream ingestion connector. You can read his detailed blog here and check out the GitHub repo here.

- Backfill Upsert Table Via Flink/Pinot Connector — Uber’s Yupeng Fu shared how the transportation leader decided to deal once and for all with the real-world pain of backfilling upsert data. The challenge is that upserts tend to be on a live table, and backfilling is traditionally a batch task. So what’s the best way to tackle this?

StarTree Cloud Talks

StarTree Cloud, powered by Apache Pinot, also includes additional capabilities. Two sessions delved into specific features of our fully managed cloud Database-as-a-Service (DBaaS):

- Improving Customer Satisfaction with Automated Monitoring and Anomaly Detection — StarTree ThirdEye is our anomaly detection and root cause analysis suite. StarTree’s Madhumita Mantri and Just Eat Takeaway’s Leon Graveland guided users through its purpose, design, and algorithms, providing practical examples and best practices. You can read the Twitter thread on the talk here.

- Get Your Data into Apache Pinot Faster with StarTree’s Data Manager — StarTree’s Tim Santos and Seughyun Lee took users through the capabilities of StarTree Data Manager and how it makes the data modeling, ingestion, and validation workflow process easy. You can also read my related Twitter thread here.

More Industry Talks

- Elementary Analytics with Kafka Streams — Confluent’s Anna McDonald showed how data format translations could be performed via Kafka Streams, employing methods like windowing, aggregate functions, and continuous to discrete mappings.

- Upgrading Log-Analytics Clusters to OpenSearch — Logz’s Amitai Stern showed why and how they made the move from ElasticSearch to OpenSearch. The audience learned how they were able to “change tires on a moving bus.”

- Deeply Declarative Data Pipelines — LinkedIn’s Ryanne Dolan showed how to combine the power of Flink and Kubernetes. His low-code, declarative approach showed how to practically deploy stream processing simply via SQL and YAML. Check the Twitter thread for his talk here.

- Role Based Access Control in Real-Time Streaming Data: What, Why and How — DeltaStream founder Hojjat Jafarpour presented a novel approach to applying Role Based Access Control (RBAC) to event streaming, and how to overcome current common shortcomings in existing solutions.

- Introducing Quix Streams—a Python-Kafka Library for Data-Intensive Workloads — McLaren is renowned for speed and peak performance, whether in Formula 1 racing or in the analysis of the “firehose of data” coming from IoT sensors attached to those cars. Discover how they developed and why they open sourced their solution, Quix Streams.

- Calculating the Value of Pie: Real-Time Survey Analysis With Apache Kafka® — Confluent’s Danica Fine showed how to create a real-time bot to watch Telegram, input messages into Kafka topics, and analyze results with ksqlDB.

- Streaming Aggregation of Cloud Scale Telemetry — Confluent’s Shay Lin showed how the event streaming giant manages to ingest 5 million raw telemetry metrics per second, and why they switched to a push-based model for their analytics, based on Kafka Streams and Apache Druid.

Thank You to Everyone!

An event like Real-Time Analytics Summit takes a lot of work in preparation, content review, behind the scenes, and on stage. To all of our sponsors, speakers, reviewers, StarTree staff, and attendees, I wanted to thank you all for making the 2023 event so successful. Look forward to more on-demand videos in the coming weeks ahead and more blog posts that will provide deeper dives into the topics presented in the individual sessions.